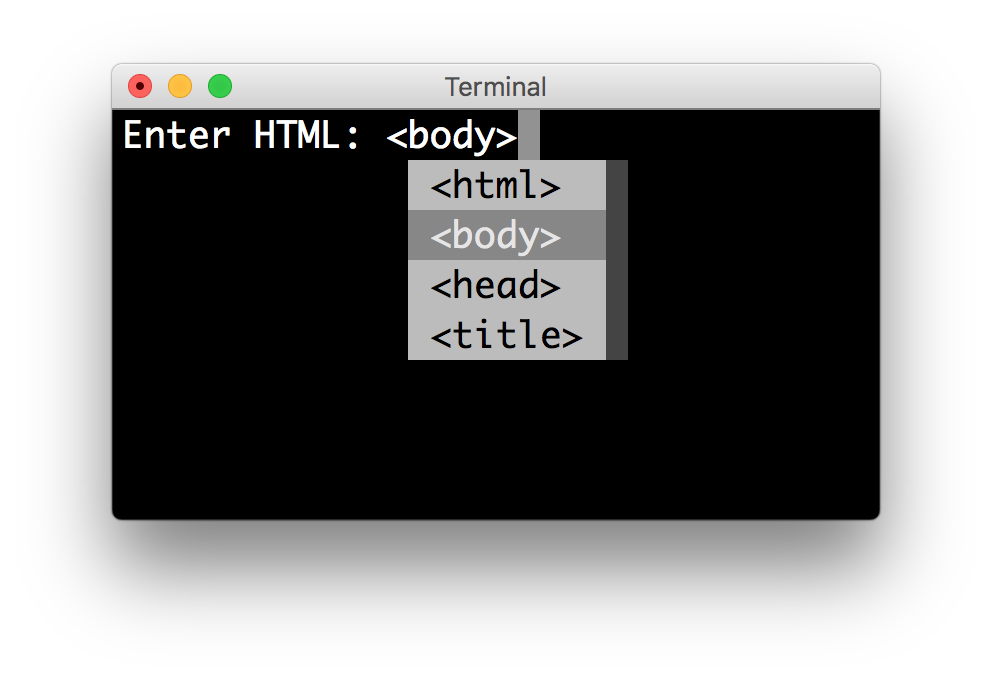

Fast autocomplete using Directed Word Graph (DWG) and Levenshtein Edit Distance. The results are cached via LFU (Least Frequently Used).

Read about why fast-autocomplete was built here: This library was written when we came to the conclusion that Elasticsearch's Autocomplete suggester is not fast enough and doesn't do everything that we need:

1. Once we switched to Fast Autocomplete, our average latency went from 120ms to 30ms, an improvement of 3-4x in performance, and errors went down to zero.
2. Elasticsearch's Autocomplete suggester does not handle any sort of combination of the words you have put in. For example, Fast Autocomplete can handle `2018 Toyota Camry in Los Angeles` when the words `2018`, `Toyota Camry`, and `Los Angeles` are fed into it separately, while Elasticsearch's autocomplete needs that whole sentence to be fed to it to show it in Autocomplete results.

Regarding #1, one objection is: yes, but you are using caching. We are also doing Levenshtein edit distance using a C library, so it improves there too.

Read about how fast-autocomplete works here: In a nutshell, what the fast Autocomplete does is:

Update the `data/relations.jsonl` file with your own automatically generated prompts.

To change evaluation settings, go to `scripts/run_experiments.py` and update the configurable values accordingly. Note: each of the configurable settings is marked with a comment.

- Uncomment the settings of the LM you want to evaluate with (and comment out the other LM settings) in the `LMs` list at the top of the file.
- Update the `common_vocab_filename` field to the appropriate file. Anything evaluating both BERT and RoBERTa requires this field to be `common_vocab_cased_rob.txt` instead of the usual `common_vocab_cased.txt`.
- Set `use_ctx` to `True` if running evaluation for Relation Extraction.
- Set `synthetic` to `True` for perturbed sentence evaluation for Relation Extraction.
- In the `get_TREx_parameters` function, set `data_path_pre` to the corresponding data path.
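The Levenshtein edit distance mentioned in the Fast Autocomplete description above can be illustrated with a short pure-Python sketch. This is not the library's code (the text says it uses a C library for this step); it only shows the metric itself, plus a hypothetical `closest_word` helper, invented here, that picks the nearest known word for a mistyped one. The `max_distance` threshold is likewise an assumption.

```python
from typing import List, Optional


def levenshtein(a: str, b: str) -> int:
    """Return the minimum number of single-character insertions,
    deletions, or substitutions needed to turn a into b."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string in the inner loop
    previous = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ch_a in enumerate(a, start=1):
        current = [i]  # distance from a[:i] to ""
        for j, ch_b in enumerate(b, start=1):
            insert_cost = current[j - 1] + 1
            delete_cost = previous[j] + 1
            substitute_cost = previous[j - 1] + (ch_a != ch_b)
            current.append(min(insert_cost, delete_cost, substitute_cost))
        previous = current
    return previous[-1]


def closest_word(typed: str, vocabulary: List[str], max_distance: int = 2) -> Optional[str]:
    """Hypothetical helper: return the vocabulary word nearest to `typed`,
    or None if nothing is within `max_distance` edits."""
    best_word, best_distance = None, max_distance + 1
    for word in vocabulary:
        distance = levenshtein(typed, word)
        if distance < best_distance:
            best_word, best_distance = word, distance
    return best_word
```

With a vocabulary such as `["2018", "toyota", "camry"]`, calling `closest_word("toyta", ...)` would pick `toyota`, since a single inserted letter separates the two.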
Create the RoBERTa model directory with `mkdir pre-trained_language_models/roberta`.

The datasets are as follows:

- original_rob: We filtered facts in original so that each object is a single token for both BERT and RoBERTa.
- trex: We split the extra T-REx data collected (for train/val sets of original) into train, dev, test sets.
- Trimmed the original dataset to compensate for both the RE baseline and RoBERTa. We also excluded relations P527 and P1376 because the RE baseline doesn't consider them.

Generating Prompts: Quick Overview of Templates

A prompt is constructed by mapping things like the original input and trigger tokens to a template. The example is a template for generating fact retrieval prompts with 3 trigger tokens. It contains a placeholder for the subject in any (subject, relation, object) triplet in fact retrieval, a marker that denotes the placement of a special token that will be used to "fill-in-the-blank" by the language model, and a marker that denotes each trigger token in the set of trigger tokens that are shared across all prompts.

The special tokens differ depending on the language model (BERT or RoBERTa) you choose to generate prompts. For BERT, stick `[CLS]` and `[SEP]` to each end of the template.
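The template mechanism described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the project's implementation: the marker strings `{subject}`, `<trigger>`, and `<predict>` and the `build_prompt` function are hypothetical names invented here, and the trigger words in the usage note are made up for readability. The boundary and mask tokens are those of the standard BERT and RoBERTa tokenizers.

```python
from typing import List

# Hypothetical marker strings, invented for this sketch only.
SUBJECT_SLOT = "{subject}"   # placeholder for the subject of a triplet
TRIGGER_SLOT = "<trigger>"   # one slot per shared trigger token
PREDICT_SLOT = "<predict>"   # where the model fills in the blank

# Boundary and mask tokens of the standard BERT and RoBERTa tokenizers.
SPECIAL_TOKENS = {
    "bert": {"start": "[CLS]", "end": "[SEP]", "mask": "[MASK]"},
    "roberta": {"start": "<s>", "end": "</s>", "mask": "<mask>"},
}


def build_prompt(template: str, subject: str, triggers: List[str], model: str) -> str:
    """Fill a template with a subject and trigger tokens, then wrap it
    in the boundary tokens of the chosen language model."""
    tokens = SPECIAL_TOKENS[model]
    if template.count(TRIGGER_SLOT) != len(triggers):
        raise ValueError("need exactly one trigger token per trigger slot")
    prompt = template.replace(SUBJECT_SLOT, subject)
    for trigger in triggers:
        # Fill the trigger slots left to right, one token each.
        prompt = prompt.replace(TRIGGER_SLOT, trigger, 1)
    prompt = prompt.replace(PREDICT_SLOT, tokens["mask"])
    return f'{tokens["start"]} {prompt} {tokens["end"]}'
```

For example, `build_prompt("{subject} <trigger> <trigger> <trigger> <predict> .", "Dante", ["was", "born", "in"], "bert")` yields a prompt with three trigger tokens between the subject and the mask, wrapped in `[CLS]` and `[SEP]`. Passing `"roberta"` instead swaps in that model's special tokens.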
